Projects

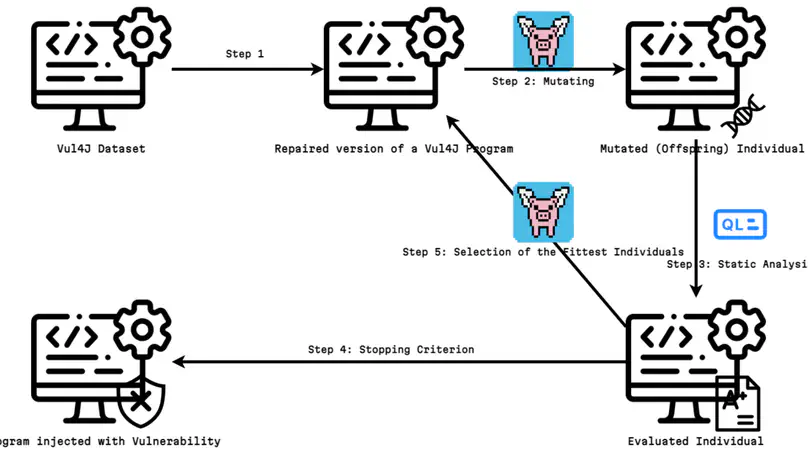

This thesis explores the idea of applying genetic improvement to inject vulnerabilities into programs. Generating vulnerabilities automatically in this manner would allow the creation of datasets of vulnerable programs, which would in turn help train machine-learning models to detect vulnerabilities more efficiently. This idea was put to the test by implementing VulGr, a modified version of PyGGi, a framework dedicated to genetic improvement. VulGr uses CodeQL, a static code analyser, offering a new approach to the static detection of vulnerabilities. VulGr’s end goal was to use CodeQL to inject vulnerabilities into programs of the Vul4J dataset. This experiment proved unsuccessful: CodeQL lacked accuracy and was too time-consuming to produce concrete results in an acceptable time span (less than 72 hours). However, the general approach and VulGr remain relevant for future use, as CodeQL is an ongoing community effort promising new updates that fix the issues mentioned.

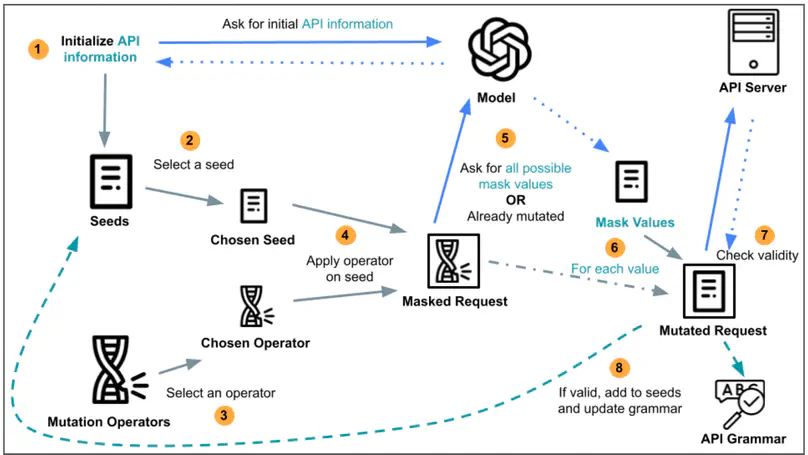

Application Programming Interfaces, known as APIs, are increasingly popular in modern web applications. With APIs, users around the world are able to access a plethora of data contained in numerous server databases. To understand the workings of an API, formal documentation is required. This documentation is also required by API testing tools, which aim to improve the reliability of APIs. However, as writing API documentation can be time-consuming, API developers often overlook the process, resulting in unavailable, incomplete or informal API documentation. Recent Large Language Model technologies such as ChatGPT, trained on billions of resources across the web, have proven highly capable of automating tasks. Such capabilities could therefore be used to generate API documentation. This Master’s Thesis proposes the first approach to leveraging Large Language Models to automatically infer RESTful API specifications. Preliminary strategies are explored, leading to the implementation of a tool entitled MutGPT. The intent of MutGPT is to discover API features by generating and modifying valid API requests, with the help of Large Language Models. Experimental results demonstrate that MutGPT is capable of sufficiently inferring the specification of the tested APIs, with an average route discovery rate of 82.49% and an average parameter discovery rate of 75.10%. Additionally, MutGPT discovered 2 undocumented and valid routes of a tested API, which has been confirmed by the relevant developers. Overall, this Master’s Thesis uncovers 2 new contributions:

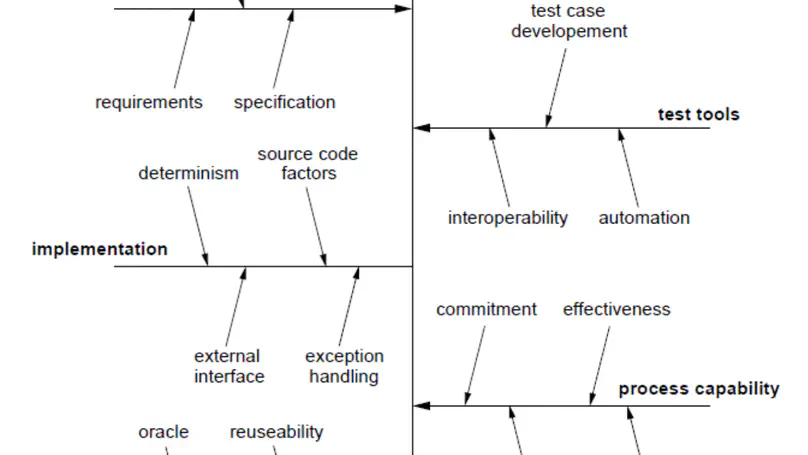

Code Smells have been studied for more than 20 years now. They are used to describe a design flaw in a program intuitively. In this study, we wish to identify the impact of some of these Code Smells and, more specifically, their potential impact on Testability. To do this, we will study the state of the research on both Code Smells and Testability. Using those studies, we will define a set of parameters to characterise the two concepts. With that information, we will analyse the statistical distribution of our samples and try to understand the relationship between Code Smells and Testability in a corpus of Java projects.

The CyberExcellence project started in January 2022 under the umbrella of the CyberWal initiative and is funded by the Walloon region. It aims to position Wallonia as a major player in cybersecurity on the national and international map by developing a core framework allowing the implementation of solutions based on practical and thoughtful cybersecurity with a competitive advantage.

This project aims at developing a holistic approach to socio-technical debt by designing a framework for helping developers and managers to address software debt and prioritize mitigating actions in an industrial context.

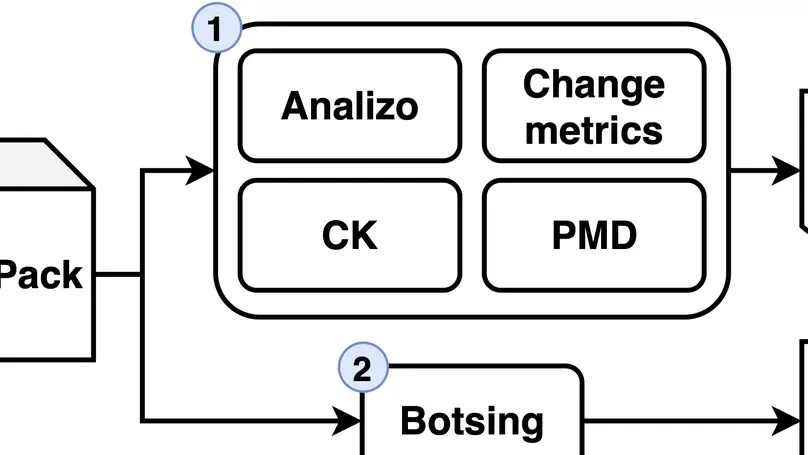

This master’s thesis project revisits the links between search-based crash reproduction and software quality metrics to assess the hardness of search-based crash-reproducing test case generation.

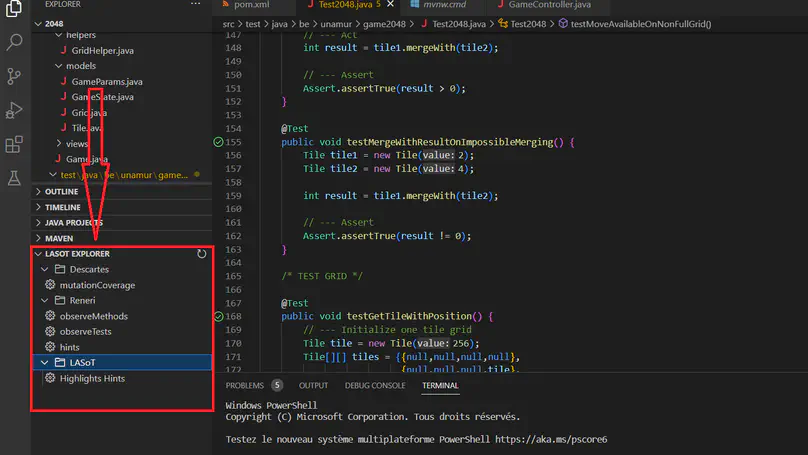

Software testing is a neglected subject in computer science courses. Over the years, methods and tools have appeared to provide educational support for learning it. Mutation testing is a technique used to evaluate the effectiveness of test suites. Recently, a variant called extreme mutation testing, which reduces computational and time costs, has emerged, and Descartes, an extreme mutation engine, was developed. With the support of a plugin extension called Reneri, Descartes can generate a report providing information to the developer on potential reasons why mutants remain undetected. In this thesis, an extension for Visual Studio Code has been developed in order to incorporate the information generated by Descartes and Reneri. The purpose of the experiment is to assess whether the inclusion of this data can help master’s students improve their test assertions. The results showed that this information, integrated into an editor, was well received by the students and that it guided them towards a refinement of their test suites.
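To make the idea of extreme mutation concrete, here is a minimal Python sketch. It is not how Descartes or Reneri are implemented (both target Java), and every name in it (`is_adult`, `extreme_mutants`, `weak_suite`, `strong_suite`, `surviving_mutants`) is hypothetical and chosen only for illustration. The sketch assumes that an extreme mutant discards a method's whole body and returns a fixed value, and that a mutant survives when the test suite still passes against it.

```python
def is_adult(age: int) -> bool:
    """Original method under test (hypothetical example)."""
    return age >= 18


def extreme_mutants():
    """Extreme mutants: the whole body is replaced by a constant return value."""
    return {
        "always_true": lambda age: True,
        "always_false": lambda age: False,
    }


def weak_suite(fn) -> bool:
    """A weak test suite: it checks only one outcome of the method."""
    return fn(30) is True


def strong_suite(fn) -> bool:
    """A refined test suite: it checks both outcomes of the method."""
    return fn(30) is True and fn(10) is False


def surviving_mutants(suite):
    """Return the names of mutants the suite fails to detect (it still passes)."""
    return sorted(
        name for name, mutant in extreme_mutants().items() if suite(mutant)
    )


if __name__ == "__main__":
    print("weak suite lets survive:", surviving_mutants(weak_suite))
    print("strong suite lets survive:", surviving_mutants(strong_suite))
```

A surviving mutant such as `always_true` shows the student that the assertions never exercise the `False` branch, which is the kind of feedback an editor integration could surface next to the test code.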

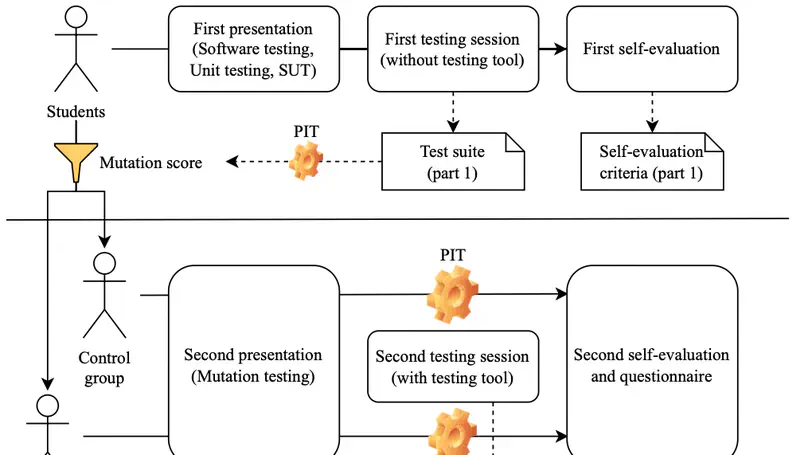

Although software testing is critical in software engineering, studies have shown a significant gap between students’ knowledge of software testing and the industry’s needs, hinting at the need to explore novel approaches to teaching software testing. Among them, classical mutation testing has already proven to be effective in helping students. We hypothesise that extreme mutation testing could be more effective by introducing more obvious mutants to kill. In order to study this question, we organised an experiment with two undergraduate classes comparing the usage of two tools, one applying classical mutation testing, and the other one applying extreme mutation testing. The results contradicted our hypothesis. Indeed, students with access to the classical mutation testing tool obtained a better mutation score, while the others seem to have mostly covered more code. Finally, we have anonymised and published the students’ test suites in adherence to best open-science practices, and we have developed guidance based on previous evaluations and our own results.