Projects

Amid growing concern over filter bubbles and content diversity, this thesis explores the impact of feedback mechanisms on the user experience of YouTube’s recommendation algorithm. The study examines how giving users more control can influence their interactions with the algorithm. Based on user interviews, personas were created to understand user behaviors and expectations. A Chrome extension was then developed to allow users to report errors in their recommendation feed. Results indicate that this mechanism enhances user satisfaction and the sense of control, though some limitations point to areas for future improvement. The study also proposes a methodology to evaluate contextual thematic diversity on YouTube, paving the way for further research into recommendation diversity and self-actualization systems.

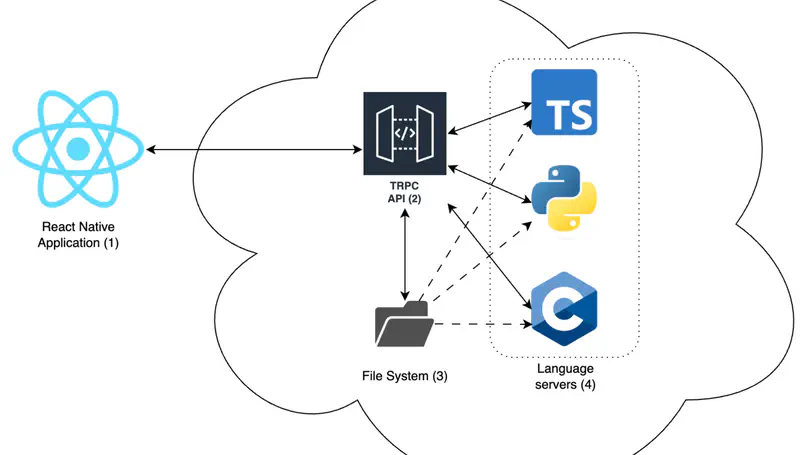

This master’s thesis investigates how the Language Server Protocol (LSP) can be used to develop a mobile and ergonomic code editor. The popularity of mobile devices has increased significantly over the past decade, accelerating the shift from desktop solutions to mobile ones. However, code editing, traditionally carried out on a computer, still lacks real alternatives that provide a suitable development environment and multilanguage support on mobile devices. Previous work focuses on interaction solutions that improve code editing productivity, mainly by adapting the code editor to a single programming language. By integrating language servers through the LSP, we develop new design and interaction solutions that allow multilanguage support in a single mobile code editor. In this thesis, we present a prototype code editor that combines interaction solutions found in the literature with LSP functionalities, and we evaluate it in terms of productivity and usability. This work aims to provide an alternative on mobile devices to the traditional desktop development environment, addressing current technological shifts and transforming the way developers may code in the future.

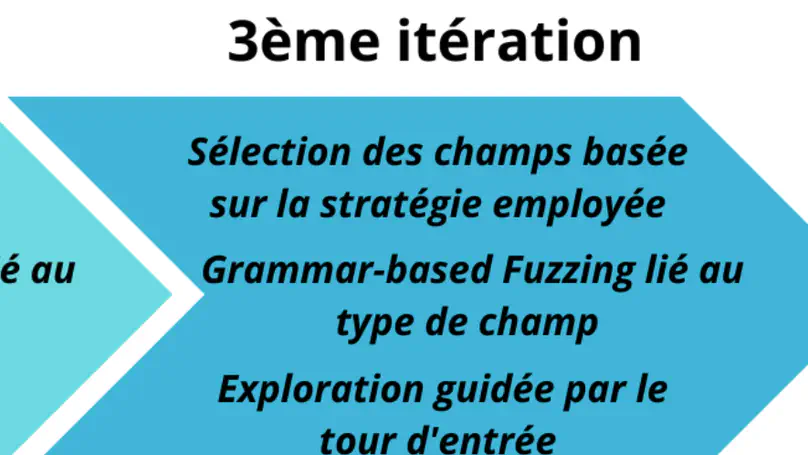

For many years, Odoo, a company providing business management services, has been steadily expanding the scope and complexity of its software, a web application. In response to that complexity, introducing automated testing techniques appears to be the natural next step beyond the testing tools the company already uses. Other tools for automatically testing web interfaces have been created in the past, but often with limitations. This thesis explores the techniques that can be applied to implement fuzzing on the web interface of the Odoo software. It shows that some methods do not seem applicable at present, while others work very well. A viable method is proposed and implemented, and different configurations of the method are evaluated. Ultimately, the thesis shows that the proposed method has some weaknesses, but that future work in this direction is possible.

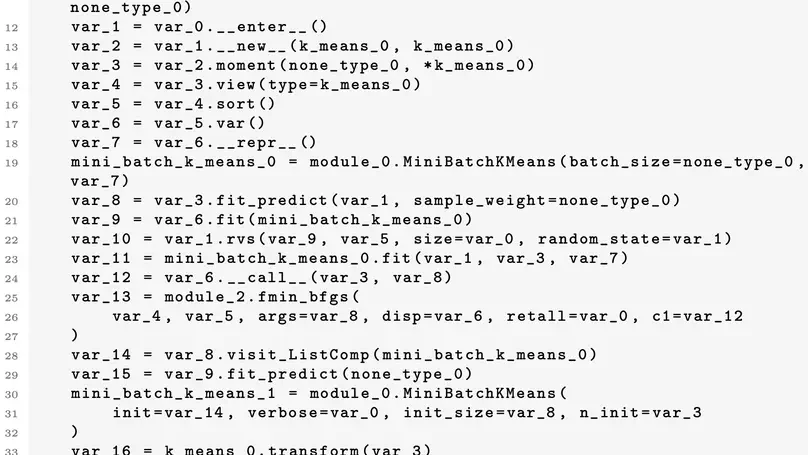

The field of automated test case generation has grown considerably in recent years, with the aim of reducing software testing costs and finding bugs. However, techniques for automatically generating test cases for machine learning libraries still produce low-quality tests, and papers on the subject tend to target Java, whereas the machine learning community tends to work in Python. Some papers have attempted to explain the causes of these poor-quality tests and to make it possible to generate tests in Python automatically, but they are still fairly recent, and no study has yet attempted to improve these test cases in Python. In this thesis, we introduce two improvements to Pynguin, an automated test case generation tool for Python: generating better test cases for machine learning libraries using structured input data, and better handling of crashes from C-extension modules. Based on a set of 7 modules, we show that our approach covers lines of code that are unreachable with the traditional approach and generates error-revealing test cases. We expect our approach to serve as a starting point for integrating testers’ knowledge of program input data more easily into automated test case generation tools, and for creating tools that find more crash-causing bugs.

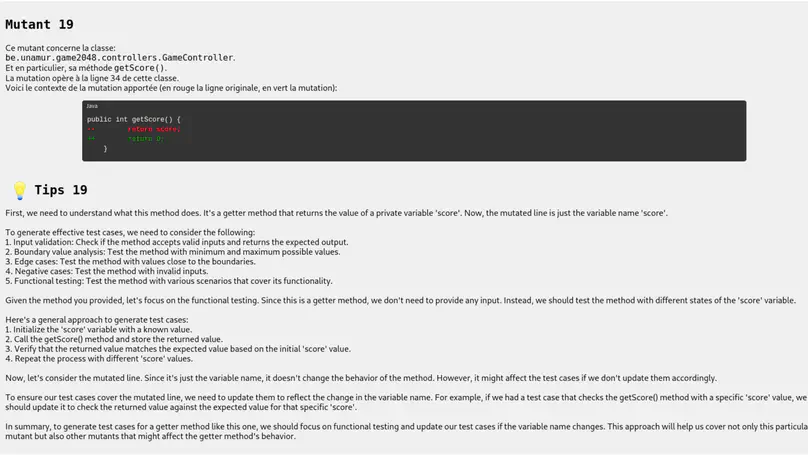

The widespread digitalization of society and the increasing complexity of software make it essential to develop high-quality software test suites. In recent years, several techniques for learning software testing have been developed, including techniques based on mutation testing. At the same time, the recent performance of language models in text comprehension and generation, as well as in code generation, makes them potential candidates for assisting students in learning how to write tests. To confirm this, an experiment was carried out with students with little experience in software testing, comparing the results obtained by students using a report from a classic mutation testing tool with those obtained using a report augmented with hints generated by a language model. The results seem promising: the augmented reports improved the mutation score and mutant coverage more broadly across the group than the classic reports did. In addition, the augmented reports seem to have been most effective for testing methods that modify and retrieve the values of private variables.

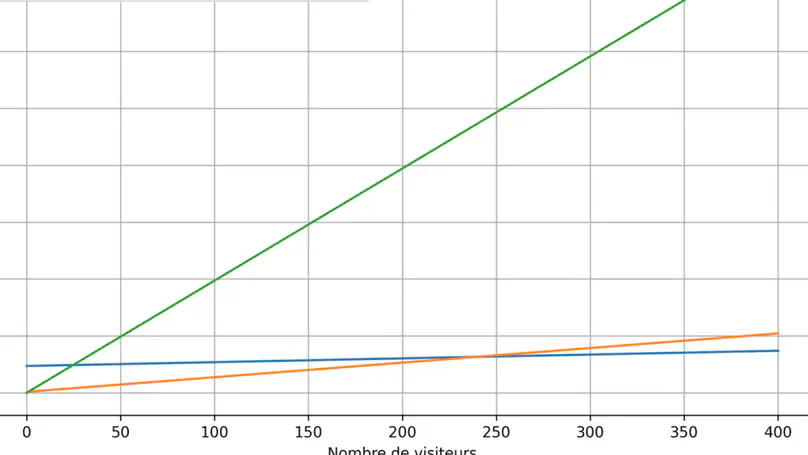

Energy efficiency in computing is an important subject that is increasingly being addressed by researchers and developers. Nowadays, a large share of websites are built using the WordPress CMS, while other developers prefer site generators, which they consider more secure and energy-efficient. This study focuses on the server-side energy consumption of these two methods of creating websites. A detailed analysis of the results makes it possible to identify borderline cases and to recommend the best technology to use, depending on the type of project.

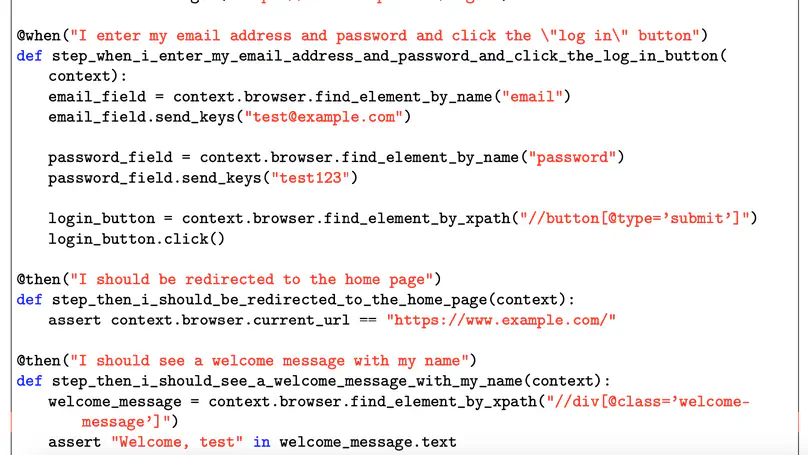

Software development faces persistent challenges in terms of maintainability and efficiency, which drives the ongoing search for innovative approaches. Agile methodologies, in particular Behaviour-Driven Development (BDD), have gained ground thanks to their ability to promote responsiveness to change and communication between stakeholders. However, as with many methods, the use of BDD can lead to maintenance costs and productivity problems. To meet these challenges, this research investigates the adaptation of advanced automatic data generation techniques, in particular SELF-INSTRUCT, to augment BDD datasets.

In recent years, sustainability concerns have emerged in the software engineering community. However, little research focuses on the link between the evolution of source code and its energy consumption. Consequently, most developers do not know how much energy their software consumes during its development. To address this problem, we want to build a theory that links the evolution of source code to its energy consumption profile. This relies on measuring how the energy consumption of multiple projects evolves using repository mining approaches, analyzing the reasons for this evolution, designing appropriate data visualizations to make this information available to developers, and evaluating these objectives through the development of a framework for developers. This theory and the associated framework may give developers better knowledge of how the energy consumption of their software evolves, as well as tools to reduce it.

The Gender Equals Future project, led by the University of Namur and conducted in collaboration with Interface3.Namur and Form@Nam, is part of the Women in Digital plan of the SPF Economie. More specifically, it studies the influence of teaching methods and environmental factors on girls and women in STEM disciplines and ICT programs. The final objective is to formulate suggestions and recommendations for educational institutions in order to foster the access and retention of women in STEM disciplines and to promote gender equality.

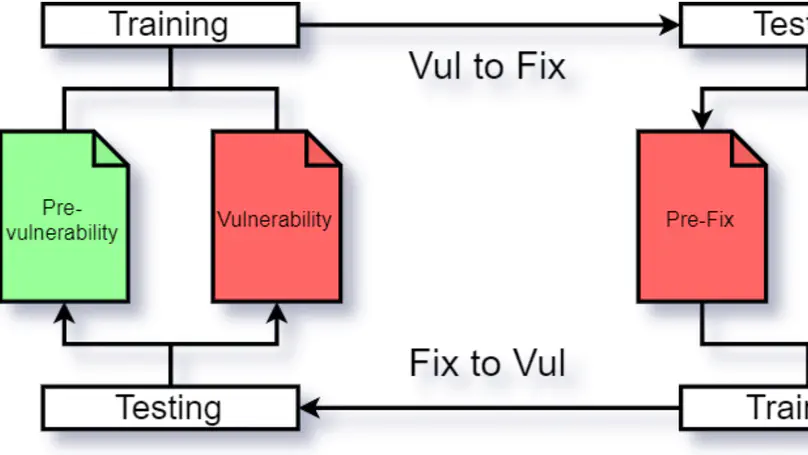

Multiple techniques exist to find vulnerabilities in code, such as static analysis and machine learning. Although machine learning techniques are promising, they need to learn from a large quantity of examples. Since no such quantity of data exists for vulnerable code, vulnerability injection techniques have been developed to create it. Both vulnerability prediction and injection techniques based on machine learning usually use the same kind of data, namely pairs made of the vulnerable code just before the fix and its fixed version. However, using the fixed version is not realistic, as the vulnerability was introduced in a different version of the code that may differ greatly from the fixed version. Therefore, we suggest using pairs made of the code version that introduced the vulnerability and the version preceding it. This is more realistic, but it is only relevant if machine learning techniques can properly learn from such data and if the patterns learned are significantly different from those learned with the usual method. To verify this, we trained vulnerability prediction models on both kinds of data and compared their performance. Our analysis showed that a model trained on pairs of vulnerable code and their fixed versions is unable to predict vulnerabilities from the vulnerability-introducing versions. The same holds in the opposite direction, even though both models are able to learn properly from their data and to detect vulnerabilities on similar data. Therefore, we conclude that using vulnerability-introducing code for machine learning training is more relevant than using the fixed versions.