Projects

Digital citizenship, defined by the Council of Europe as the capacity to participate responsibly in communities through competent and positive engagement with digital technologies, is becoming an increasingly pressing societal issue as more of our lives move online. Two-thirds of citizens have expressed a desire for more education and training to make up for their insufficient digital competencies. However, current approaches to digital citizenship education have limitations, particularly regarding the scope of the content they convey, how that content is operationalized for skills acquisition, and their neglect of learners' pre-established representations of digital technologies. Given these challenges, one promising avenue is the use of audiovisual fiction as a vector for fostering digital citizenship. Indeed, recent studies indicate a clear connection between the consumption of fiction (e.g., science fiction movies) and aspects of digital citizenship beyond coding. Furthermore, the use of fiction in classrooms for purposes other than digital citizenship education has a long tradition of established and operationalized practices, such as design fiction, with clear evaluation instruments. Lastly, students arrive in the classroom with pre-established representations of digital technologies, possibly skewed by fictional tropes, that need to be accounted for.

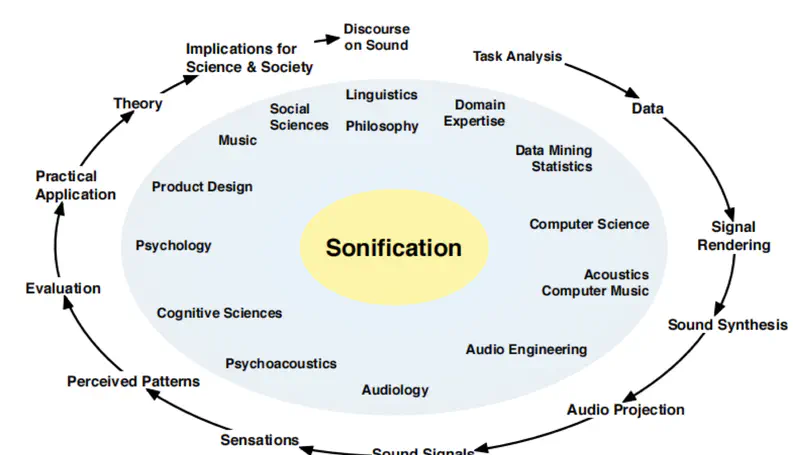

This thesis explores how sonification and gamification can be integrated into development environments to reduce developers' cognitive load and improve their user experience. A state-of-the-art review of the scientific literature was first conducted, covering fields such as cognitive load, sonification, and gamification. Building on it, a tool called EchoCode was developed: an extension for Visual Studio Code that integrates the concepts of sonification and gamification. The extension offers three main features: personalized audio feedback associated with keyboard shortcuts, audio feedback that adapts to the outcome of a program's execution (failure or success), and an integrated To-Do List with two operating modes for structuring tasks in a software development context. This approach aims to relieve the visual channel, support working memory, and help developers maintain optimal concentration.
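
The first two features can be illustrated with a small sketch. It shows one way to choose outcome-dependent audio feedback, along with a per-user table that maps shortcuts to sounds. For brevity it is written in Python rather than as an actual VS Code extension, which would use the VS Code extension API. The sound file names, the shortcut table, and the functions `cue_for_shortcut` and `run_with_cue` are all hypothetical. Treating exit code 0 as success is an assumption, and actually playing the sound is left out.

```python
import subprocess
import sys

# Hypothetical, user-configurable mapping from keyboard shortcut to sound file.
SHORTCUT_CUES = {"ctrl+s": "save.wav", "ctrl+shift+p": "palette.wav"}

# Hypothetical sound files for the two execution outcomes.
OUTCOME_CUES = {"success": "success.wav", "failure": "failure.wav"}


def cue_for_shortcut(shortcut, cues=SHORTCUT_CUES):
    """Return the sound bound to a shortcut, or None if the user bound nothing."""
    return cues.get(shortcut.lower())


def run_with_cue(command):
    """Run a program and pick the cue matching its outcome (exit code 0 = success)."""
    result = subprocess.run(command, capture_output=True, text=True)
    outcome = "success" if result.returncode == 0 else "failure"
    return outcome, OUTCOME_CUES[outcome]


if __name__ == "__main__":
    print(cue_for_shortcut("Ctrl+S"))
    print(run_with_cue([sys.executable, "-c", "raise SystemExit(1)"]))
```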

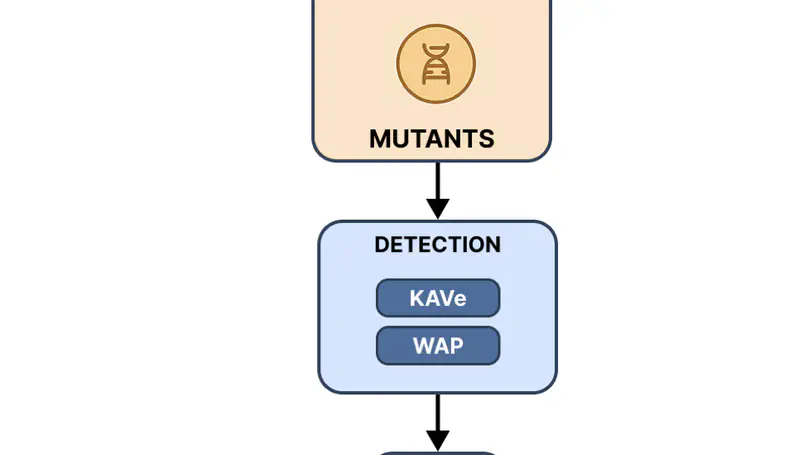

Dealing with bugs and vulnerabilities is one of the most important tasks in computer science, especially in the context of web applications. Many techniques exist to detect and prevent these issues, and mutation testing is one of the most widely used. However, creating mutants manually is a time-consuming and error-prone process. To address this, we combine static analysis with a large language model (LLM) to generate mutants automatically. In this study, we compare the mutants an LLM produces when guided by three different sources of static analysis: KAVe, WAP, and the LLM itself. Our results show significant variability between tools. Mutants produced with traditional static analysers vary heavily depending on the type of vulnerability, and tend to be better when tools are combined. With the LLM, mutant quality is more consistent across vulnerability types, and overall code coverage is significantly higher than with traditional approaches. On the other hand, although LLM-generated mutants pass initial verification more often, they frequently contain syntactic or semantic errors. These findings suggest that LLMs are a promising addition to automated vulnerability testing workflows, especially when used in conjunction with static analysis tools. However, further refinement is needed to reduce the generation of incorrect or invalid code and to align mutants better with real-world exploitability.

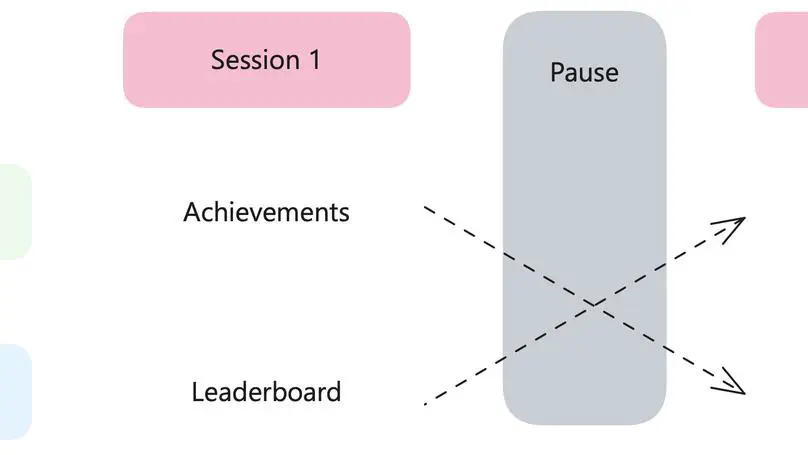

Software testing is still struggling to become widespread in the software development industry. The same holds in academia, where students often devote little energy to testing for lack of motivation or time. To address these issues, this study explores gamification as a lever for engagement in software testing. Following a state-of-the-art review of testing techniques and of gamification principles applied to software development, an existing IntelliJ plugin was reused and enhanced. Initially centered on a system of achievements, the plugin was extended with a leaderboard so that the two approaches could be compared. Achievements are badges, represented here by trophies, awarded when the user reaches certain levels of progress in the plugin. A leaderboard, by contrast, is a table that ranks participants according to the points they have earned. The aim is to determine which mode better fosters student engagement while also improving performance in test writing.
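
The two modes can be contrasted in a short sketch. Trophies depend only on a user's own progress crossing fixed thresholds, whereas the leaderboard compares users by points. This Python sketch assumes hypothetical thresholds (5, 20, and 50 tests) and a flat 10 points per test. The plugin's real rules are not described here, and the names `Student`, `record_tests`, and `leaderboard` are illustrative.

```python
from dataclasses import dataclass, field

# Hypothetical trophy thresholds, expressed as number of tests written.
TROPHIES = {"Bronze": 5, "Silver": 20, "Gold": 50}
POINTS_PER_TEST = 10  # hypothetical scoring rule


@dataclass
class Student:
    name: str
    tests_written: int = 0
    points: int = 0
    trophies: list = field(default_factory=list)


def record_tests(student, count):
    """Credit newly written tests and award any trophy whose threshold is reached."""
    student.tests_written += count
    student.points += count * POINTS_PER_TEST
    for trophy, threshold in TROPHIES.items():
        if student.tests_written >= threshold and trophy not in student.trophies:
            student.trophies.append(trophy)


def leaderboard(students):
    """Rank by points (highest first), breaking ties alphabetically by name."""
    ranked = sorted(students, key=lambda s: (-s.points, s.name))
    return [(s.name, s.points) for s in ranked]
```

For example, after `record_tests(alice, 22)` and `record_tests(bob, 6)`, Alice holds the Bronze and Silver trophies and tops the leaderboard with 220 points to Bob's 60.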

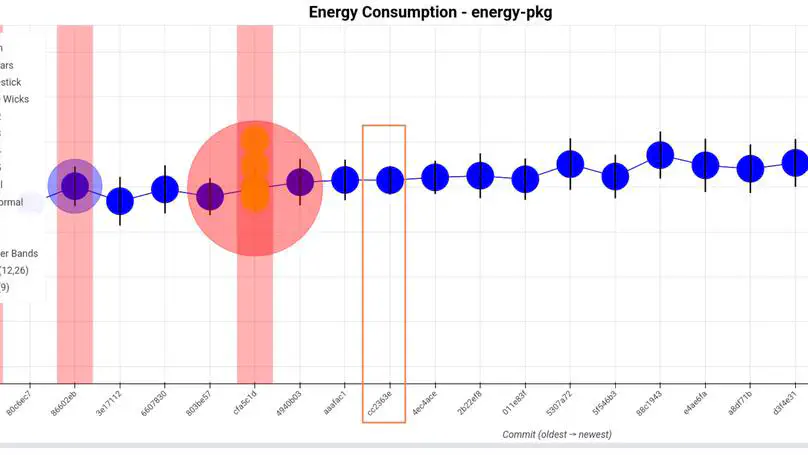

Green software engineering is emerging as a crucial response to the rising energy impact of digital technologies, whose footprint may soon rival that of aviation and shipping combined. While several tools aim to help developers track energy consumption and detect regressions, each has its own limitations. This motivated the development of EnergyTrackr, a fully modular and automated tool designed to detect statistically significant changes in energy consumption.
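
The abstract does not say which statistical test EnergyTrackr uses. One possible approach is a two-sided permutation test applied to repeated energy measurements taken before and after a change. The sketch below flags a regression only when the difference is significant and the mean went up. The function names, the 10,000 resamples, and the 0.05 significance level are assumptions.

```python
import random
from statistics import fmean


def permutation_p_value(before, after, n_resamples=10_000, seed=0):
    """Two-sided permutation test on the difference of mean energy."""
    rng = random.Random(seed)
    observed = abs(fmean(after) - fmean(before))
    pooled = list(before) + list(after)
    n = len(before)
    extreme = 0
    for _ in range(n_resamples):
        rng.shuffle(pooled)  # randomly reassign measurements to "before"/"after"
        if abs(fmean(pooled[n:]) - fmean(pooled[:n])) >= observed:
            extreme += 1
    # +1 in numerator and denominator counts the observed labelling itself.
    return (extreme + 1) / (n_resamples + 1)


def is_energy_regression(before, after, alpha=0.05):
    """Flag a change only if it is significant and energy went up."""
    return permutation_p_value(before, after) < alpha and fmean(after) > fmean(before)
```

A permutation test makes no normality assumption, which suits noisy energy readings. The fixed seed keeps the result reproducible across runs.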

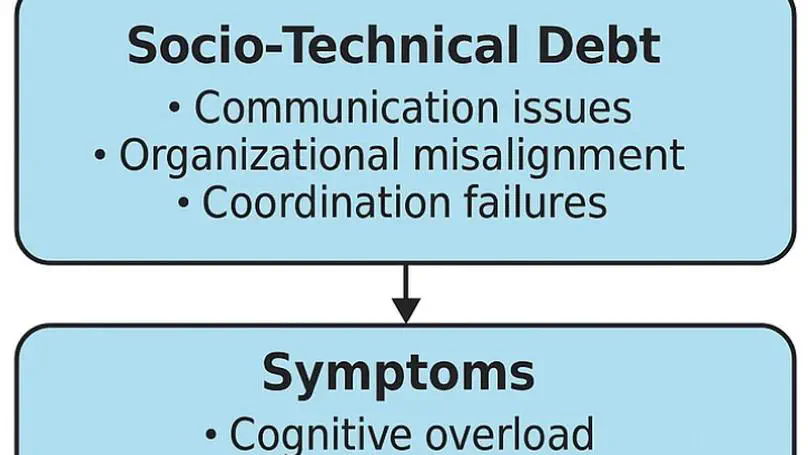

This thesis explores the impact of socio-technical debt on developer well-being. It combines two approaches: a quantitative one (a structured questionnaire and a perceived stress scale) and a qualitative one (a thematic analysis of open-ended responses). The study highlights links between perceived debt, social tensions, and workplace stress.
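
The abstract does not name the stress scale used. The sketch below assumes the widely used 10-item Perceived Stress Scale (PSS-10). On that scale, answers run from 0 (never) to 4 (very often), and the four positively worded items (4, 5, 7, and 8) are reverse-scored. The function name `pss10_score` is hypothetical.

```python
# Assumption: PSS-10, with answers coded 0 (never) to 4 (very often).
REVERSED_ITEMS = {4, 5, 7, 8}  # 1-based numbers of the positively worded items


def pss10_score(responses):
    """Return the total PSS-10 score (0-40); higher means more perceived stress."""
    if len(responses) != 10:
        raise ValueError("PSS-10 expects exactly 10 responses")
    total = 0
    for item, answer in enumerate(responses, start=1):
        if not 0 <= answer <= 4:
            raise ValueError(f"item {item}: answer must be between 0 and 4")
        # Positively worded items are reverse-scored: 0 -> 4, 1 -> 3, and so on.
        total += 4 - answer if item in REVERSED_ITEMS else answer
    return total
```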

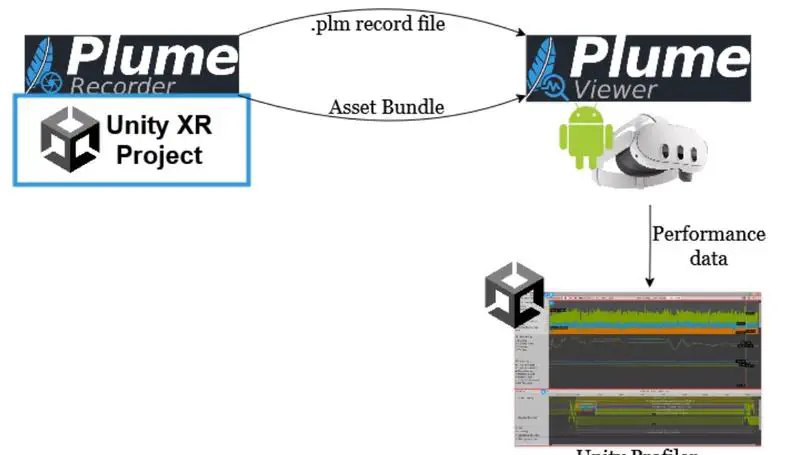

Software engineering for mixed reality headsets is still in its infancy, even though the required hardware has been available for several decades. Existing methods to streamline development remain limited, and developers often end up doing things manually. Mixed reality system testing, for example, is often done by hand: developers have to wear the headset and test the application as if they were an end user. This is a significant time sink in the development process.

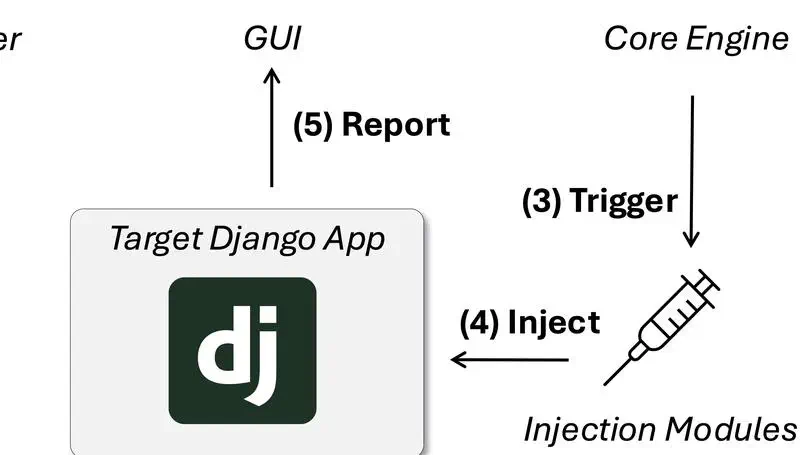

This thesis presents the development of a configurable tool that automatically injects web application vulnerabilities into existing Django codebases to support cybersecurity education. The primary goal is to enhance hands-on learning by allowing educators and students to work with realistic, production-like environments that incorporate well-known security flaws drawn specifically from the OWASP Top 10 (2021). Instead of generating synthetic applications, the tool modifies authentic Django projects, ensuring pedagogical relevance and structural fidelity.
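
One simple way such an injection could work is through a configurable catalogue of source rewrites keyed by OWASP category. The Python sketch below is hypothetical. It uses plain regular expressions on the text of a `settings.py` file to introduce A05:2021 (Security Misconfiguration), by enabling `DEBUG` and opening `ALLOWED_HOSTS` to every host. The catalogue, its key format, and the `inject` function are illustrative. The thesis's tool may rely on a different mechanism, such as syntax-tree transformations.

```python
import re

# Hypothetical catalogue mapping an OWASP Top 10 (2021) category to
# (pattern, replacement) rewrites applied to a Django settings.py file.
INJECTIONS = {
    "A05:2021-Security Misconfiguration": [
        (r"^DEBUG[ \t]*=[ \t]*False[ \t]*$", "DEBUG = True"),
        (r"^ALLOWED_HOSTS[ \t]*=[ \t]*\[.*\][ \t]*$", "ALLOWED_HOSTS = ['*']"),
    ],
}


def inject(settings_source, categories):
    """Apply the selected categories; return the new source and what was changed."""
    applied = []
    for category in categories:
        for pattern, replacement in INJECTIONS[category]:
            # MULTILINE makes ^ and $ match at each line of the settings file.
            settings_source, count = re.subn(
                pattern, replacement, settings_source, flags=re.MULTILINE
            )
            if count:
                applied.append((category, replacement))
    return settings_source, applied
```

Returning the list of applied changes could let an educator produce an answer key showing exactly which flaws were injected.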

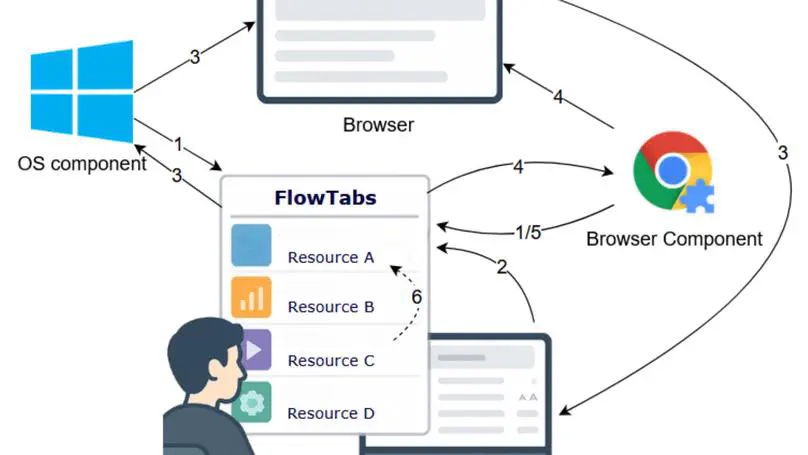

This master's thesis explores the design and validation of a context management tool integrated into a development environment. Developers constantly rely on various resources (documentation, terminal, development tools, etc.), which forces them to switch frequently between their development environment and these resources. This often leads to interruptions, distractions, and, consequently, a drop in productivity. To address this issue, the study proposes FlowTabs, an extension for the Visual Studio Code IDE. The extension brings resources together in a unified interface and uses a relevance algorithm that adapts to the developer's behavior to suggest the most appropriate resources. User testing showed that it integrates well into the development environment and offers a satisfactory developer experience, cognitive comfort, and effective resource management. This work thus provides an original context management solution embedded directly in a development environment.
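
The abstract does not detail FlowTabs' relevance algorithm. A common way to rank resources by observed behavior is a "frecency" score. Each visit contributes a weight that decays exponentially with age, so frequently and recently used resources rank first. The sketch below assumes this technique and a hypothetical 30-minute half-life. The names `relevance` and `suggest` are illustrative.

```python
import math

HALF_LIFE = 30 * 60  # hypothetical: a visit's weight halves every 30 minutes (seconds)


def relevance(visit_times, now, half_life=HALF_LIFE):
    """Sum of exponentially decayed visits: frequent and recent resources score high."""
    decay = math.log(2) / half_life
    return sum(math.exp(-decay * (now - t)) for t in visit_times)


def suggest(resources, now, k=3):
    """Return the k resource names with the highest relevance at time `now`."""
    ranked = sorted(resources, key=lambda name: relevance(resources[name], now), reverse=True)
    return ranked[:k]
```

For instance, with `now = 10_000`, documentation opened at timestamps 9,900 and 9,950 outranks a terminal used three times about two hours earlier (at 1,000, 2,000, and 3,000).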

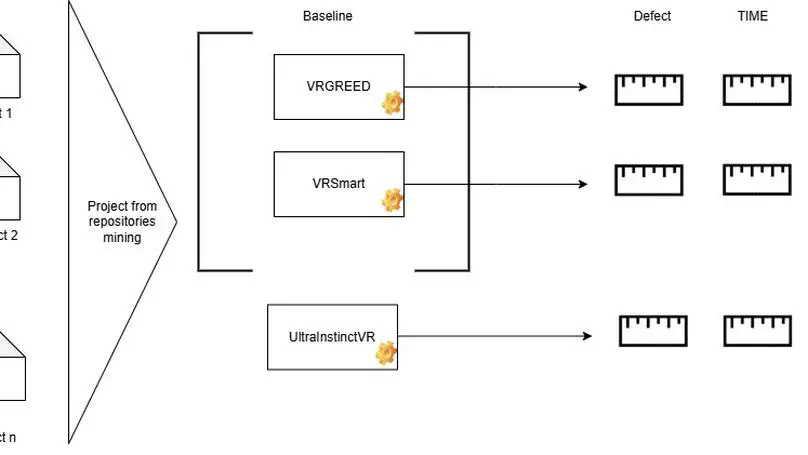

Virtual Reality (VR) is increasingly recognized as a technology with substantial commercial potential. It is progressively being integrated into a wide array of everyday applications, supported by an expanding ecosystem of immersive devices. Despite this growth, the long-term adoption and maintenance of VR applications remain limited, particularly due to the lack of formalized software engineering practices adapted to the unique characteristics of VR environments. Among the resulting challenges, the absence of effective, systematic, and reproducible tools for interaction testing constitutes a significant barrier to ensuring application quality and user experience.